Thursday, September 18, 2008

Cloud Computing Event

I thought I'd pass this along...my company Chariot Solutions is hosting Fall Forecast 2008 - Computing Among The Clouds this October 17th. The 2008 Fall Forecast conference highlights the emerging areas of cloud computing, software as a service and multi-core technologies. What's most appealing to me about cloud computing is the ability to quickly stand up a computing platform with very little investment (both cost and time). This great solution for working with SOA/Integration products because they can demand significant computing resources.

Tuesday, September 16, 2008

RESTful Web Services using IONA FUSE ESB 4.0 Preview and Spring Dynamic Modules

Roberto Rojas has a very nice blog post on RESTful Web Services using IONA FUSE ESB 4.0 Preview and Spring Dynamic Modules, which I highly recommend reading!

Friday, September 5, 2008

What's new in ServiceMix 4.x?

Recently, I was talking with a client about a new project of theirs based on the latest release FUSE ESB 3.x. That got me thinking, I knew that a FUSE ESB 4.0 Preview / ServiceMix 4.x (SMX4) was near release so in my efforts to keep current (as well as inform our client) I decided to take a closer look. I was able to find pretty much information on SMX4 however, I wasn't able to find a concise write-up that touched on key changes between the versions. So after some research I decided to blog about what I found. In this post I'm only focusing on the major changes with this new version without getting too far into the “weeds”.

So, what's new in SMX4? This is the first question I asked when I heard that a new major release of ServiceMix is on the horizon. Like most people, I pulled down the latest binaries of SMX4 and started going through the documentation. However, first before jumping into SMX4 lets get a quick picture of what the ServiceMix 3.x (SMX3) technology stack looks like so we can get a point of reference.

In SMX3 the JBI 1.0 container is a runtime environment for a Normalized Message Router (NMR) and JBI components (e.g. Service Engines and Binding Components) leveraging Apache ActiveMQ as the Message Broker. Typically an ESB offers at least message routing (e.g. ServiceMix EIP & Apache Camel), transformation (e.g. XSLT) and connectivity (e.g. File, JMS, HTTP, etc.) as does SMX3. Apache CXF is used to provide SOAP support and xml marshaling. From an operations perspective, SMX3 provides JMX-based management for running components and other internals as well as run standalone or embedded in an application server or web container (e.g. JBoss, Tomcat, etc.). There is a lot more that SMX3 has to offer however for this post that's a good picture. One last note on SMX3, version 3.2 and higher requires Java 5.

In SMX4 the technology stack really hasn't changed much. The key technologies are still there like Apache ActiveMQ, Apache CXF, JMX, etc. Apache Camel is the preferred technology for routing however, ServiceMix EIP appears to still be supported. Ok, so what has changed?

Looking at the architecture, the most noticeable change is the separation of the JBI container into a ServiceMix Kernel and a ServiceMix NMR. The ServiceMix Kernel is a lightweight OSGi-based environment deployed on Apache Felix (an OSGi R4 Services Platform implementation). The kernel and NMR are their own projects in Apache and can be downloaded and built separately. However, not to worry, if you download FUSE 4 preview (certified release of SMX4) from IONA you get the kernel and NMR bundled along with other stuff to run the ESB.

Why the change to OSGi?

James Strachan (ServiceMix co-founder and committer) pointed out to me that one of JBI's weakest areas is the cumbersome classloader model and that needed to be addressed. OSGi solves this problem with advanced OSGi bundling and classloading mechanisms. Ok, that sounds good but what is OSGi? Well in a nutshell, it is an environment where bundles (modules) communicate through well-defined services while hiding their internals from other bundles. OSGi also specifies how components are installed and managed. Components can be started, stopped, updated and uninstalled dynamically without bringing down the entire server. This is very beneficial for high availability systems (like an ESB). OSGi bundles are versioned and only bundles that can collaborate are wired together in the same class space. This solves the problem with library dependencies or JAR hell :) There are many more benefits to OSGi and the OSGi Alliance is a great place to start learning. With this change, ServiceMix now becomes an OSGi and JBI-based ESB.

What changed in the NMR?

The obvious change is that the NMR is now it's own Apache project, ServiceMix NMR. So, theoretically you could run different versions of the SeviceMix Kernel and NMR. Unfortunately I don't have a good use case for that although, this separation does provide flexibility. The NMR is now a set of OSGi bundles that can be deployed on any OSGi runtime but mainly built on top of the ServiceMix Kernel. The NMR project is also the JBI 1.0 implementation with plans to support JBI 2.0 in the future. There are a few things missing with ServiceMix NMR 1.0-m1 release (from the release notes):

A few other interesting changes to mention. First, SMX4 is now a standalone only runtime. Previous versions supported embedding in application servers, web containers as well as applications. Flows are no longer used for message exchanges. This confused me a little however, thanks to James he clarified things. Flows are NMR flow types that are the mechanism by which the NMR sends messages from one Binding Component or Service Engine to another. SMX3 has 4 flow types that ended up causing confusion and added complexity for developers. This was simplified in SMX4 with a tendency to use explicit routes by exploiting Camel's routing capabilities.

Last change to note, SMX4 no longer performs transaction specific processing. However, it will pass transaction information along as it would any other message. I was fortunate enough to receive some insight from Guillaume Nodet (Apache Project Management Committee Chair and active committer) regarding the changes in transaction support and it turns out that the release notes are a little misleading. Guillaume explained that previously the NMR was considered as a transactional resource, which means the sending of a JBI exchange through the NMR could be part of the transaction (depending if it was sent synchronously or not). This is no longer the case with SMX4. Transactions are now conveyed along with the exchanges whether they are sent synchronously or asynchronously. As a result, the NMR does not need to be aware of transactions because they are now controlled by the different components instead. The benefits from this change include:

So, what's new in SMX4? This is the first question I asked when I heard that a new major release of ServiceMix is on the horizon. Like most people, I pulled down the latest binaries of SMX4 and started going through the documentation. However, first before jumping into SMX4 lets get a quick picture of what the ServiceMix 3.x (SMX3) technology stack looks like so we can get a point of reference.

In SMX3 the JBI 1.0 container is a runtime environment for a Normalized Message Router (NMR) and JBI components (e.g. Service Engines and Binding Components) leveraging Apache ActiveMQ as the Message Broker. Typically an ESB offers at least message routing (e.g. ServiceMix EIP & Apache Camel), transformation (e.g. XSLT) and connectivity (e.g. File, JMS, HTTP, etc.) as does SMX3. Apache CXF is used to provide SOAP support and xml marshaling. From an operations perspective, SMX3 provides JMX-based management for running components and other internals as well as run standalone or embedded in an application server or web container (e.g. JBoss, Tomcat, etc.). There is a lot more that SMX3 has to offer however for this post that's a good picture. One last note on SMX3, version 3.2 and higher requires Java 5.

In SMX4 the technology stack really hasn't changed much. The key technologies are still there like Apache ActiveMQ, Apache CXF, JMX, etc. Apache Camel is the preferred technology for routing however, ServiceMix EIP appears to still be supported. Ok, so what has changed?

Looking at the architecture, the most noticeable change is the separation of the JBI container into a ServiceMix Kernel and a ServiceMix NMR. The ServiceMix Kernel is a lightweight OSGi-based environment deployed on Apache Felix (an OSGi R4 Services Platform implementation). The kernel and NMR are their own projects in Apache and can be downloaded and built separately. However, not to worry, if you download FUSE 4 preview (certified release of SMX4) from IONA you get the kernel and NMR bundled along with other stuff to run the ESB.

Why the change to OSGi?

James Strachan (ServiceMix co-founder and committer) pointed out to me that one of JBI's weakest areas is the cumbersome classloader model and that needed to be addressed. OSGi solves this problem with advanced OSGi bundling and classloading mechanisms. Ok, that sounds good but what is OSGi? Well in a nutshell, it is an environment where bundles (modules) communicate through well-defined services while hiding their internals from other bundles. OSGi also specifies how components are installed and managed. Components can be started, stopped, updated and uninstalled dynamically without bringing down the entire server. This is very beneficial for high availability systems (like an ESB). OSGi bundles are versioned and only bundles that can collaborate are wired together in the same class space. This solves the problem with library dependencies or JAR hell :) There are many more benefits to OSGi and the OSGi Alliance is a great place to start learning. With this change, ServiceMix now becomes an OSGi and JBI-based ESB.

What changed in the NMR?

The obvious change is that the NMR is now it's own Apache project, ServiceMix NMR. So, theoretically you could run different versions of the SeviceMix Kernel and NMR. Unfortunately I don't have a good use case for that although, this separation does provide flexibility. The NMR is now a set of OSGi bundles that can be deployed on any OSGi runtime but mainly built on top of the ServiceMix Kernel. The NMR project is also the JBI 1.0 implementation with plans to support JBI 2.0 in the future. There are a few things missing with ServiceMix NMR 1.0-m1 release (from the release notes):

- no support for JMX deployment and ant tasks

- no support for Service Assemblies Connections

- no support for transactions (a transaction manager and a naming context can be injected into components if they are available as OSGi services, but not transaction processing - suspend / resume - will be performed, as it would be requested for real support)...more on this a little later.

A few other interesting changes to mention. First, SMX4 is now a standalone only runtime. Previous versions supported embedding in application servers, web containers as well as applications. Flows are no longer used for message exchanges. This confused me a little however, thanks to James he clarified things. Flows are NMR flow types that are the mechanism by which the NMR sends messages from one Binding Component or Service Engine to another. SMX3 has 4 flow types that ended up causing confusion and added complexity for developers. This was simplified in SMX4 with a tendency to use explicit routes by exploiting Camel's routing capabilities.

Last change to note, SMX4 no longer performs transaction specific processing. However, it will pass transaction information along as it would any other message. I was fortunate enough to receive some insight from Guillaume Nodet (Apache Project Management Committee Chair and active committer) regarding the changes in transaction support and it turns out that the release notes are a little misleading. Guillaume

- Improved scalability of ServiceMix when using transactions because all JBI exchanges are now sent asynchronously.

- Improved interoperability as the other JBI containers tend to follow the same pattern when/if they support transactions.

Wednesday, May 28, 2008

Building a “SOApp” with Mule (Open Source ESB)

Ok, I know that we're all up to our ears with technical acronyms, terms, buzzwords, etc. that all appear to have some marketing spin to them in hope that they will eventually work their way into industry acceptance. So, why should this be any different...just kidding, kind of :) Anyway, bear with me through this first paragraph and you'll understand how this “SOApp” thing came into existence in my little world. In 2007, I was fortunate enough to be part of a development team at Chariot Solutions responsible for designing and developing a product for the Material Handling and Distribution Industry. After a few requirements gathering sessions with the client, we discovered that the project had a number of integration points with new and existing systems...the age old integration challenge. The existing systems ranged from a COTS warehouse management system to a C-based controller that interfaced with physical hardware devices (e.g. lights, switches, buttons, etc.). Steve Smith (Chariot Architect) was involved early on in the initial discovery sessions and was quick to point out that all these integration points (files, database tables, TCP messages, etc.) we were faced with are very similar to challenges faced while implementing an SOA but on a much smaller scale. So, why not take advantage of the benefits that an ESB provides (i.e. protocol translation, message transformation and routing) to speed development and deliver a maintainable solution? Hence the “SOApp” concept was born. Well, the term “SOApp” (Service Oriented Application) was a play on the SOA acronym about 3 months ago when I was considering writing up a post (this post) to discuss a unique way of using ESB technology. A close second was “Save Our A...”, well you get the picture, anyway, back to the interesting technical stuff.

Ok, so we had a conceptual architecture centered around an ESB. The team was all in agreement that we would benefit from NOT having to write custom integration code and simply focus on developing business logic in POJOs. The decision to use Mule (v. 1.4.1) for the ESB was an easy choice for us because we had plenty of experience using it in our SOA Lab. There were many interesting aspects of the project however, this post focuses on how we dealt with the various integration challenges. Let's take a closer look. The diagram below illustrates what the architecture looks like with a focus on various system interfaces.

Warehouse Management System (WMS) – File-based Interface

The WMS generated flat files in a proprietary format on some random schedule. This data is essential to the overall operation of the entire system. These flat files needed to be processed as soon as the would appear in a directory. A Mule file connector was configured to poll a directory, pickup a file, pass the data onto a POJO for processing and eventually update the PostgreSQL database via Hibernate. Nothing fancy here just basic file processing.

PC Controller – TCP Sockets Interface

This controller serves as a central integration point for various switches, sensors, etc. A basic use case was when a sensor was triggered, the controller would send a proprietary delimited length text message was sent to a particular POJO handling that transaction in Mule. The POJO would typically query the PostgreSQL database and return the resulting data back to the controller. There was a non-functional requirement for the entire transaction to take less 200 ms. At first, we used a Mule TCP connector to communicate (both inbound and outbound) with the controller however, due to some limitations on the controller side (and time constraints) we had to switch outbound communications to custom socket code. Here was a case where we only benefited from using Mule's connectors for one communications direction however, since the socket code was easy enough to write it didn't take long to develop a workaround. Oh, the transaction performance ended up to be less than 100ms on average.

Pick-To-Light Controller – JDBC Connector Interface

This controller was a java-based controller that interfaced physical devices via the PostgreSQL database. Though this is not the “ideal” method for interfacing it was a constraint that we had to deal with. What was interesting about this interface is that we configured a Mule JDBC connector to process incoming requests when information appeared in the database tables. The POJOs that processed the data then used our Hibernate persistence layer to respond back to the controller via database tables.

Java Swing Clients – MuleClient / TCP Interface

There were two Java Swing clients that we developed for administrators and end users to interface with the system. These swing clients used the MuleClient to simplify the TCP communications with Mule. Each POJO was considered a “service” with a unique endpoint deployed in Mule. What is interesting about this is that the swing client only had to specify a single uri (e.g. tcp://localhost:) along with a payload and let the MuleClient handle the rest. There was no need to identify a specific endpoint in the URI, which made this design very flexible and maintainable. Here we leveraged the content-based routing capabilities of Mule by configuring various FilteringOutboundRouter's to call internal POJOs for processing. The outbound routers inspect the payload type and routed accordingly based on configuration.

CLI (Command Line Interface) Java Application – Telnet & MuleClient / TCP Interface

Well I saved the most interesting interface for last, IMHO :) One of the requirements was to have a handheld Windows CE device, capable of scanning barcodes, perform CRUD operations on the PostgreSQL database over a wireless 802.11b network. We had part of this problem solved by using the MuleClient to communicate with our POJOs deployed in Mule. Ok, so, how do we implement the MuleClient on a Windows CE device? Well, John Shepard (Chariot Architect) came up with a clever design to use Telnet and a basic Java application to solve the problem. This was a great example of leveraging proven technology along with a creative way of configuring Unix accounts. The end user accounts were configured to start the CLI java application after a successful login to the Unix machine instead of a bash shell. This work very well and performed beautifully. There was no need to install and manage client applications or worry about performance of the handheld device. The built-in Telnet capability of the Windows CE device was all that was needed on the client. This was very cool to see in action especially knowing that only a basic java application was behind it.

The purpose of this post was to give you an overview of how to use an ESB in new and creative ways aside from their original intent. In the future, I may expand more on these interfaces to provide more detail. There were a number of other interesting aspects to the project like using annotations and XDoclet to automatically generate the Mule configuration, etc. that is good material for future posts. Wrapping this up, we did take on some risk of using Mule in this capacity, however, luckily there were no major problems we ran into. Besides we always had Java to fall back on if Mule came up short anywhere. Could this be the beginning of a new movement in application development...the “SOApp”? Maybe, however I can already hear the snickering of my colleges as they read the end of this post :)

Ok, so we had a conceptual architecture centered around an ESB. The team was all in agreement that we would benefit from NOT having to write custom integration code and simply focus on developing business logic in POJOs. The decision to use Mule (v. 1.4.1) for the ESB was an easy choice for us because we had plenty of experience using it in our SOA Lab. There were many interesting aspects of the project however, this post focuses on how we dealt with the various integration challenges. Let's take a closer look. The diagram below illustrates what the architecture looks like with a focus on various system interfaces.

Warehouse Management System (WMS) – File-based Interface

The WMS generated flat files in a proprietary format on some random schedule. This data is essential to the overall operation of the entire system. These flat files needed to be processed as soon as the would appear in a directory. A Mule file connector was configured to poll a directory, pickup a file, pass the data onto a POJO for processing and eventually update the PostgreSQL database via Hibernate. Nothing fancy here just basic file processing.

PC Controller – TCP Sockets Interface

This controller serves as a central integration point for various switches, sensors, etc. A basic use case was when a sensor was triggered, the controller would send a proprietary delimited length text message was sent to a particular POJO handling that transaction in Mule. The POJO would typically query the PostgreSQL database and return the resulting data back to the controller. There was a non-functional requirement for the entire transaction to take less 200 ms. At first, we used a Mule TCP connector to communicate (both inbound and outbound) with the controller however, due to some limitations on the controller side (and time constraints) we had to switch outbound communications to custom socket code. Here was a case where we only benefited from using Mule's connectors for one communications direction however, since the socket code was easy enough to write it didn't take long to develop a workaround. Oh, the transaction performance ended up to be less than 100ms on average.

Pick-To-Light Controller – JDBC Connector Interface

This controller was a java-based controller that interfaced physical devices via the PostgreSQL database. Though this is not the “ideal” method for interfacing it was a constraint that we had to deal with. What was interesting about this interface is that we configured a Mule JDBC connector to process incoming requests when information appeared in the database tables. The POJOs that processed the data then used our Hibernate persistence layer to respond back to the controller via database tables.

Java Swing Clients – MuleClient / TCP Interface

There were two Java Swing clients that we developed for administrators and end users to interface with the system. These swing clients used the MuleClient to simplify the TCP communications with Mule. Each POJO was considered a “service” with a unique endpoint deployed in Mule. What is interesting about this is that the swing client only had to specify a single uri (e.g. tcp://localhost:

CLI (Command Line Interface) Java Application – Telnet & MuleClient / TCP Interface

Well I saved the most interesting interface for last, IMHO :) One of the requirements was to have a handheld Windows CE device, capable of scanning barcodes, perform CRUD operations on the PostgreSQL database over a wireless 802.11b network. We had part of this problem solved by using the MuleClient to communicate with our POJOs deployed in Mule. Ok, so, how do we implement the MuleClient on a Windows CE device? Well, John Shepard (Chariot Architect) came up with a clever design to use Telnet and a basic Java application to solve the problem. This was a great example of leveraging proven technology along with a creative way of configuring Unix accounts. The end user accounts were configured to start the CLI java application after a successful login to the Unix machine instead of a bash shell. This work very well and performed beautifully. There was no need to install and manage client applications or worry about performance of the handheld device. The built-in Telnet capability of the Windows CE device was all that was needed on the client. This was very cool to see in action especially knowing that only a basic java application was behind it.

The purpose of this post was to give you an overview of how to use an ESB in new and creative ways aside from their original intent. In the future, I may expand more on these interfaces to provide more detail. There were a number of other interesting aspects to the project like using annotations and XDoclet to automatically generate the Mule configuration, etc. that is good material for future posts. Wrapping this up, we did take on some risk of using Mule in this capacity, however, luckily there were no major problems we ran into. Besides we always had Java to fall back on if Mule came up short anywhere. Could this be the beginning of a new movement in application development...the “SOApp”? Maybe, however I can already hear the snickering of my colleges as they read the end of this post :)

Thursday, January 10, 2008

Use JConsole with ActiveMQ for a quick JMS test client

As I was working with OpenESB to try an integrate it with ActiveMQ I stumbled across a quick and easy way work with ActiveMQ. My needs were basic and I just wanted to be able to browse, add and remove messages from a set of queues. I knew that ActiveMQ has JMX support so I wondered if my basic needs could be met using JConsole. Sure enough they were. Here's what I did:

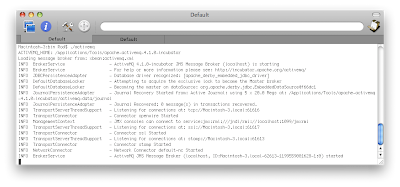

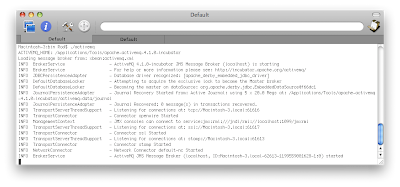

First, I configured ActiveMQ with the queues I needed and started the server (console output below).

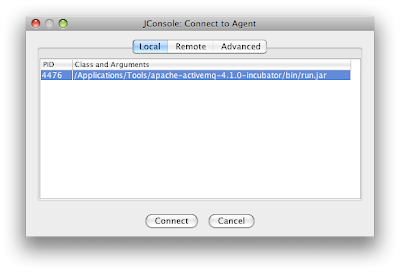

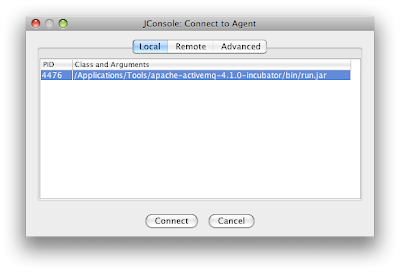

The JMX service connection uri is part of the ActiveMQ startup output (see the last line beginning with INFO ManagementContext). After the server is running you then can start up JConsole by typing jconsole at a terminal/command prompt. The ActiveMQ JMX agent should be displayed in the local tab of the JConsole: Connect to Agent dialog box. If not, you can enter in the management context uri (e.g. service:jmx:rmi:///jndi/rmi://localhost:1099/jmxrmi)

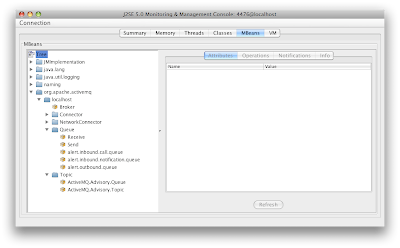

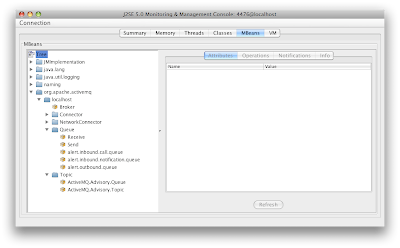

After connecting you will be presented with a Summary screen showing a snapshot of ActiveMQ. From here simply click on the MBeans tab and expand the org.apache.activemq node to see the queues and topics configured in ActiveMQ.

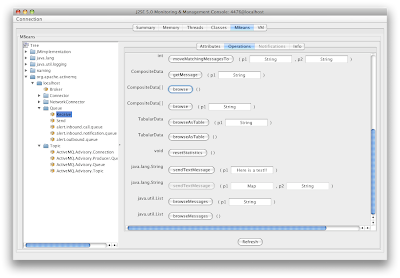

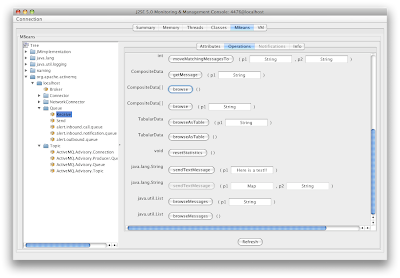

Ok, now we're able perform as many MBean operations that are available. When you're unit testing a JMS service (or application) the first thing you typically do is place a message on a queue. You're probably saying "well duh?" and that's what I'd say. The funny thing is that I find myself spending more time googling for a tool or writing/modifying sample test code to do this simple task. You'll be amazed at how easy it is through jconsole to place a text message on a queue. Again, select the queue you want to put a message on and then on the Operations tab in jconsole and scroll down to the operation sendTextMessage. Fill in the parameter with some text and click the sendTextMessage button.

You should see a dialog box pop up with the operation return and a message ID. Congratulations you just put a text message on a queue. You can now experiment with some of the operations like browse, purge, etc. There you have it...a simply easy to use JMS test client.

It's very easy to find the queues and topics in the MBeans tree with a stand-alone instance of ActiveMQ. However, it gets very difficult to even find the ActiveMQ node when you attach to an application server (e.g. Geronimo) that comes bundled with ActiveMQ. I don't have a good answer for that yet.

Happy messaging :)

First, I configured ActiveMQ with the queues I needed and started the server (console output below).

The JMX service connection uri is part of the ActiveMQ startup output (see the last line beginning with INFO ManagementContext). After the server is running you then can start up JConsole by typing jconsole at a terminal/command prompt. The ActiveMQ JMX agent should be displayed in the local tab of the JConsole: Connect to Agent dialog box. If not, you can enter in the management context uri (e.g. service:jmx:rmi:///jndi/rmi://localhost:1099/jmxrmi)

After connecting you will be presented with a Summary screen showing a snapshot of ActiveMQ. From here simply click on the MBeans tab and expand the org.apache.activemq node to see the queues and topics configured in ActiveMQ.

Ok, now we're able perform as many MBean operations that are available. When you're unit testing a JMS service (or application) the first thing you typically do is place a message on a queue. You're probably saying "well duh?" and that's what I'd say. The funny thing is that I find myself spending more time googling for a tool or writing/modifying sample test code to do this simple task. You'll be amazed at how easy it is through jconsole to place a text message on a queue. Again, select the queue you want to put a message on and then on the Operations tab in jconsole and scroll down to the operation sendTextMessage. Fill in the parameter with some text and click the sendTextMessage button.

You should see a dialog box pop up with the operation return and a message ID. Congratulations you just put a text message on a queue. You can now experiment with some of the operations like browse, purge, etc. There you have it...a simply easy to use JMS test client.

It's very easy to find the queues and topics in the MBeans tree with a stand-alone instance of ActiveMQ. However, it gets very difficult to even find the ActiveMQ node when you attach to an application server (e.g. Geronimo) that comes bundled with ActiveMQ. I don't have a good answer for that yet.

Happy messaging :)

Saturday, January 5, 2008

It's been a long time!

Just a quick post to let you know that this blog isn't dead. I know, it has been a long time since my last post. The truth is that I've been involved with a major SOA implementation (using BEA tools) since September 2007. This project is taking up most of my time and unfortunately my blog is feeling the affects :). I hope to get back in the lab soon however, in the meantime I'll be making some random SOA related posts that I hope you'll find useful.

Oh, btw...Chariot Solutions is hosting its annual Emerging Technologies conference in March (http://www.phillyemergingtech.com/). This year there will be an SOA track along with various others. Take a look and hope to see you there!

Oh, btw...Chariot Solutions is hosting its annual Emerging Technologies conference in March (http://www.phillyemergingtech.com/). This year there will be an SOA track along with various others. Take a look and hope to see you there!

Subscribe to:

Comments (Atom)